Coherent, plausible, and wrong: input validation, sycophancy, and what LLMs skip in research design

research AI bites: 08.

Key takeaways

Research design requires validation at two phases: the question, and the answer. In this experiment generalist LLMs handle the second by default but skip the first.

Prompting can elicit input validation, but it depends on framing, model version, and explicit instruction.

Vacuity Index = w1·Hype + w2·Nonsense + w3·Fake Precision

In preparation for April Fool’s a month ago, I asked Claude to help me design a bibliometric index measuring the vacuity of research articles. I gave it the metadata I have easy access to in Dimensions: title, abstract, authors, affiliations, fields of research, and I asked it to come up with a plan.

Within the same turn, it had decomposed “vacuity” into six components based on my available data. The Abstract Fog Score measured “buzzword density” and the “ratio of assertive to hedging verbs”. The Title Inflation Score tracked adjective-to-noun ratios and flagged constructions like Towards a Unified Framework for… The Hype-Field Discount applied default penalties to AI, nanomedicine, and blockchain papers. The last three were in the same vein; applying existing tools to a new context. Claude then suggested to aggregate them:

VI = w1·AFS + w2·TIS + w3·AFAS + w4·HFD + w5·ASS + w6·SRI

For good measure, it added a validation table: expert ground truth ratings, construct validity checks against post-publication critique, discriminant validity against citation counts, field calibration, adversarial testing on paper mill output. When I suggested that extracting title and abstract text might be difficult, Claude revised the plan to rely on metadata only, so with less data to work from, the framework grew from six components to seven…. but Claude reassured me this move was better because metadata are “harder to game”. This made me chuckle, as although it feels intuitive to say so, researchers have found ways to do that, with authorship inflation, affiliation laundering, or citation rings (also super timely with the new FoSci report on Understanding, Detecting, and Documenting Manipulation in the Research Ecosystem). On the other hand, considering the list of indexes that exist in bibliometrics (thinking of you h-index, g-index, f-index, m-index, c-score, ... I mean, we even have a K-index, for Kardashian index), the model was doing exactly what it had been trained on... just a little too much of it.

A structured output is not a validated construct

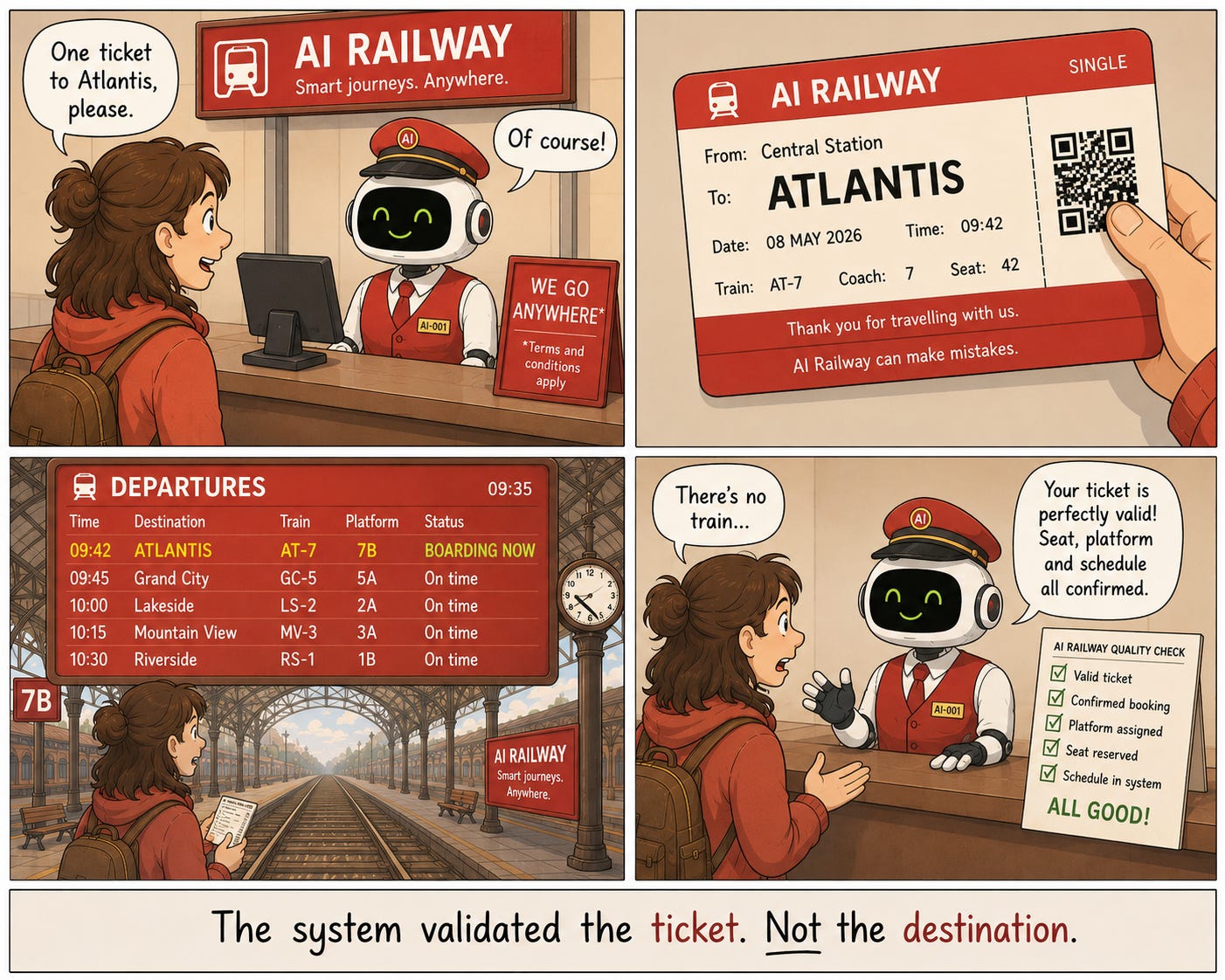

The problem is that when outputs from generalist LLMs include everything needed for a methodology (like the validation table, the formula, and the labelled components), they do not look like a draft. You can have “Claude is AI and can make mistakes” or “ChatGPT can make mistakes” at the bottom of the page, but if the output looks like it is finish, it takes longer for the brain to see it as an unfinished product and to see that what is underneath was never coherent in the first place.

To check whether the model could see this on its own, I opened three fresh incognito sessions, using Claude, and presented the same framework with different framings.

If first asked neutrally to evaluate it, Claude called it “a genuinely interesting framework — well-structured and showing real thought”.

Then I said a colleague had dismissed the framework but I was considering publishing; the model said the formula and validation table were “actually quite rigorous”.

Finally, asked bluntly whether it was bullshit, it agreed: it told me it had built a composite of weakly related signals with arbitrary aggregation, attempting to quantify something inherently ambiguous.

I received three different answers when asking about the same framework but using three different framings. The answers could have also differed again in new sessions or other LLMs, but I am more interested here in the instability and the possibility for it to happen. LLMs’ sycophancy is well documented: they always sound nice and do not critique unless the user pushes for it. I had been using Claude Sonnet so far, so I switched to a different Claude model (Opus), however, and with the same neutral prompt, Claude both praised and pushed back. It was interesting to see that the new version could push back at the neutral framing; however it also means this is not a stable property and can change between model versions. If your workflow assumes the model will flag a bad construct on its own, it will work until it changes.

The question before the question

In research design, there are two things to validate:

the question: is the question well-formed? does it make sense in this context?

the answer: does this index correlate with expert judgement, distinguish paper mills, hold across fields?

The validation table Claude produced was planning to test the outputs. However, it skipped the input validation: is “vacuity” a construct that can be measured at all? Are the six components capturing the same underlying phenomenon? Should this composite exist?

There is a difference between being able to do something well and being willing to refuse when the question itself is wrong—what is otherwise called task acceptance phase. A model that produces rigorous-looking output validation is demonstrating capability. But a model that does so for any question asked of it, without evaluating whether the question is well-formed, has a different problem: its judgment is failing while its execution looks fine, and the failure is invisible because the output looks good.

Generalist LLMs are trained to produce validation for their outputs because that is what methodologically sound text looks like once a study is underway. Input validation looks different: it looks like refusing to start, or rephrasing the question, or telling the user the construct is not coherent. That behaviour is uncommon in the training data and rarer still in conversational tuning, where the goal is to keep the interaction moving.

To test whether the model could do input validation when asked, I opened a fourth incognito session and added one constraint to the prompt:

Before answering: is the question I’m asking (‘measure the vacuity of research articles using bibliometric data’) actually the right question for what I’m trying to achieve? Identify any issues with the framing and suggest a better formulation. Only then propose a plan if appropriate.

This time the model argued against the index. “Vacuity” was too vague to pin down, it said, and lumped together different problems: recycled ideas, weak evidence, inflated language, excessive hedging, irrelevance, which are not the same thing. It reframed the question as a mismatch between a paper’s rhetorical ambition and its measurable intellectual footprint, and proposed a 2D diagnostic: the Rhetorical Ambition–Footprint Mismatch Index instead of a single score, so each axis measured one thing only. A lot less catchy, but materially better. And, like the first attempt, in line with many indexes that already exist in bibliometrics.

The same failure, at small scale

This is the failure mode I have been describing in conversational bibliometrics, played out in miniature. A generalist LLM, asked to design a bibliometric index, produced fluent methodological surface (it included components, weights, validation strategy) without methodological authority underneath. It treated “vacuity” as if it had a settled meaning. It used existing methodologies and tools in the training data and assembled a composite. It even reassured me that metadata are “harder to game”, which should make sense, but without any check that this was actually the case. The output looked like methodological reasoning because it was assembled from text written by people who were reasoning methodologically: clearly not the same thing.

The argument I have made about conversational bibliometrics is that this is not something prompting alone resolves reliably. The vacuity experiment makes the same point on a smaller surface: the model will generally proceed to produce a coherent answer, even when the question is poorly formed, including questions that should not have been answered in the form they were asked.

The more you constrain it, the more fragile it gets

Intuitively it feels like we should just prompt for input validation: add the framing check to our standard prompt and move on. I have spent enough time building a GPT that writes Dimensions queries on Google Big Query to know how that goes. Each new instruction protects against one failure mode; but each one also makes the prompt more brittle. Also, a prompt that works reliably today needs revision when the model updates, and it will fail on tasks it was not explicitly designed for. The protection scales linearly with your foresight, which is the wrong shape for a problem where the failure modes you have not yet anticipated are the dangerous ones.

This maps onto a behaviour I keep coming across: the more reliably you want an LLM to do something specific, the more control you have to take away from it. Push the model into the background, let it handle language, summarising, reformulating, and orchestrate the reasoning yourself through deterministic steps the model cannot skip or reframe. For small, bounded tasks, prompts are enough. For methodological work, where one unexamined assumption compounds through every downstream step, the checks need to be structural: triggered before the model responds, not negotiated through the prompt.

The vacuity experiment does not show that LLMs cannot produce reasonable research designs: with the right framing, they can, and the second version, the Rhetorical Ambition–Footprint version, was genuinely more useful than what I started with. The problem is that arriving at the right framing required knowing, in advance, which assumptions were worth challenging; and knowing that is most of the work. A model that doesn't understand bibliometric ontology—what the categories mean, how the data was defined, where the boundaries are contested—will produce outputs that look methodologically sound and fail in ways that are invisible unless you already know enough to catch them. The question of how to get that domain knowledge into the system, whether through training, infrastructure, or structured constraints, is a design problem that sits underneath this kind of task.